
Human brains are the best illustration of high flexibility: they can not only keep learning in new environments but also adjust their behavior to different contexts. Current deep neural networks (DNNs) lack both continual learning and context-dependent learning abilities, which leaves them too inflexible to work in complex situations. This constitutes a significant gap between the capabilities of current DNNs and those of primate brains.

Researchers from the Institute of Automation of the Chinese Academy of Sciences proposed a novel learning algorithm, orthogonal weights modification (OWM), together with a context-dependent processing (CDP) module. The combination equipped artificial neural networks with strong abilities for continual learning and context-dependent learning, and overcame catastrophic forgetting.

As a result, neural networks could progressively learn various mapping rules in a context-dependent way. This study, published in Nature Machine Intelligence, sheds light on building artificial intelligence systems with a high level of flexibility.

In addition, by using the CDP module to let contextual information modulate the representation of sensory features, a network can learn different, context-specific mappings even for identical stimuli, which further enhances its flexibility and adaptability.
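A toy sketch of that idea follows. It assumes, purely for illustration and not as the published CDP design, that a one-hot context cue is turned into a sigmoid gate that multiplies ReLU sensory features element-wise. `W_sense`, `W_ctx` and `gated_features` are hypothetical names with random, untrained weights. A single shared linear readout, solved here by least squares, can then map one and the same stimulus to opposite outputs in the two contexts.

```python
import numpy as np

rng = np.random.default_rng(1)
n_stim, n_hidden, n_ctx = 8, 32, 2

# Hypothetical, untrained weights for illustration only.
W_sense = rng.normal(size=(n_hidden, n_stim)) / np.sqrt(n_stim)  # sensory encoder
W_ctx = 3.0 * rng.normal(size=(n_hidden, n_ctx))                # context encoder


def gated_features(stimulus, context):
    """ReLU sensory features multiplied element-wise by a context gate in (0, 1)."""
    gate = 1.0 / (1.0 + np.exp(-(W_ctx @ context)))  # sigmoid of the context code
    return np.maximum(W_sense @ stimulus, 0.0) * gate


x = rng.normal(size=n_stim)  # one and the same stimulus in both contexts
contexts = np.eye(n_ctx)     # one-hot context cues
H = np.stack([gated_features(x, c) for c in contexts], axis=1)  # shape (n_hidden, n_ctx)

# One shared linear readout asked to answer +1 in context 0 and -1 in context 1.
targets = np.array([[1.0, -1.0]])
readout = targets @ np.linalg.pinv(H)
print(readout @ H)  # matches targets up to floating-point error
```

Without the gate, both columns of `H` would be the same feature vector, and no readout could give two different answers to it.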

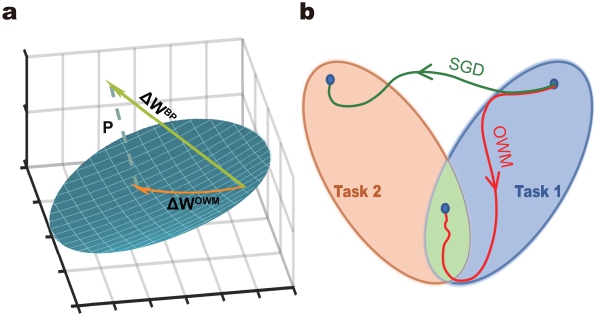

The core idea of the OWM algorithm is that, when a network is trained on a new task, its weights can only be modified in directions orthogonal to the subspace spanned by all previously learned inputs. This ensures that new learning does not interfere with previously learned tasks, because the weight changes have no effect on the network's response to old inputs.
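The orthogonality argument can be written out in a few lines. Assume a layer computes $y = Wx$, collect the previously learned inputs as the columns of $A$ (assumed linearly independent), and let $\Delta W^{\mathrm{BP}}$ be the update that ordinary backpropagation would propose; these symbols are illustrative and not taken from the article.

```latex
A = [\,x_1 \;\; x_2 \;\cdots\; x_k\,], \qquad
P = I - A\,(A^\top A)^{-1}A^\top

P\,x_i = x_i - A\,(A^\top A)^{-1}A^\top A\,e_i = x_i - A\,e_i = 0
\qquad (i = 1,\dots,k)

\Delta W = \Delta W^{\mathrm{BP}} P
\;\Longrightarrow\;
(W + \Delta W)\,x_i = W x_i + \Delta W^{\mathrm{BP}} (P\,x_i) = W x_i
```

Here $e_i$ is the $i$-th standard basis vector, so $A e_i = x_i$. A practical implementation would more likely maintain $P$ incrementally, with a small regularizer, than invert $A^\top A$ from scratch.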

Combined with a gradient-descent-based search, OWM therefore helps the network find a weight configuration that accomplishes the new task while keeping performance on previously learned tasks unchanged. Importantly, it is fully compatible with the standard backpropagation algorithm, which makes it widely applicable.
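A minimal sketch of how such a projection can slot into ordinary gradient descent, assuming a single linear layer trained on squared error. The names `update_projector` and `train`, the regularizer `alpha`, and the two random regression tasks are illustrative assumptions, not the authors' code. The projector is updated one input at a time with a recursive least-squares-style formula, so it ends up close to the exact orthogonal projector when `alpha` is small.

```python
import numpy as np


def update_projector(P, x, alpha=1e-6):
    """Fold one input x into P so that P @ x becomes approximately zero (alpha is assumed)."""
    Px = P @ x
    return P - np.outer(Px, Px) / (alpha + x @ Px)


def train(W, X, Y, P=None, lr=0.05, steps=5000):
    """Gradient descent on 0.5 * ||W X - Y||^2 / n; if P is given, every
    gradient is right-multiplied by P (the orthogonal-projection step)."""
    n = X.shape[1]
    for _ in range(steps):
        grad = (W @ X - Y) @ X.T / n
        if P is not None:
            grad = grad @ P
        W = W - lr * grad
    return W


def loss(W, X, Y):
    return 0.5 * np.sum((W @ X - Y) ** 2) / X.shape[1]


rng = np.random.default_rng(0)
n_in, n_out, n = 10, 2, 3
XA, YA = rng.normal(size=(n_in, n)), rng.normal(size=(n_out, n))  # task A
XB, YB = rng.normal(size=(n_in, n)), rng.normal(size=(n_out, n))  # task B

W_a = train(np.zeros((n_out, n_in)), XA, YA)  # learn task A normally
P = np.eye(n_in)
for x in XA.T:                                 # remember task A's inputs
    P = update_projector(P, x)

W_owm = train(W_a, XB, YB, P=P)   # learn task B with projected gradients
W_plain = train(W_a, XB, YB)      # learn task B with plain gradient descent

print(f"task A loss after B, projected: {loss(W_owm, XA, YA):.2e}")
print(f"task A loss after B, plain GD:  {loss(W_plain, XA, YA):.2e}")
print(f"task B loss after B, projected: {loss(W_owm, XB, YB):.2e}")
```

Both runs fit task B, but only the projected run leaves task A's outputs essentially untouched; the plain run overwrites them.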

Schematic of OWM (Image by CASIA)

What’s more, OWM shows a significant performance improvement over other continual learning methods, especially as the number of tasks increases. For example, a classifier trained with OWM learned to recognize more than 3,000 Chinese characters sequentially.

Although a system that can learn many different mapping rules in an online and sequential manner is highly desirable, such a system cannot accomplish context-dependent learning by itself. To achieve that, contextual information needs to interact with sensory information properly.

In the study, the researchers adopted a solution inspired by the prefrontal cortex (PFC). The PFC receives sensory inputs as well as contextual information, which enables it to select the sensory features most relevant to the current task to guide action.
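The argument above can be checked on a toy problem. The sketch below learns two conflicting rules for the same stimuli with one shared linear classifier, using an OWM-style projected gradient. All names and sizes are hypothetical, and random binary masks stand in for whatever gating the CDP module actually learns. Without a context signal, the projector built from rule 1 also blocks every direction rule 2 needs; with context gating, the classifier inputs differ between contexts and rule 2 can be learned without disturbing rule 1.

```python
import numpy as np


def update_projector(P, f, alpha=1e-6):
    """Recursive update so that P @ f becomes approximately zero (alpha is assumed)."""
    Pf = P @ f
    return P - np.outer(Pf, Pf) / (alpha + f @ Pf)


def train(W, F, Y, P, lr=0.05, steps=5000):
    """Gradient descent on 0.5 * ||W F - Y||^2 / n with every gradient right-multiplied by P."""
    n = F.shape[1]
    for _ in range(steps):
        W = W - lr * ((W @ F - Y) @ F.T / n) @ P
    return W


def loss(W, F, Y):
    return 0.5 * np.sum((W @ F - Y) ** 2) / F.shape[1]


rng = np.random.default_rng(2)
n_stim, n_hidden, n = 8, 40, 4
W_sense = rng.normal(size=(n_hidden, n_stim)) / np.sqrt(n_stim)  # hypothetical sensory encoder
masks = rng.random(size=(2, n_hidden)) < 0.5                     # hypothetical binary context gates

X = rng.normal(size=(n_stim, n))   # the SAME stimuli are used in both contexts
Y1 = rng.normal(size=(1, n))       # rule for context 0
Y2 = rng.normal(size=(1, n))       # conflicting rule for context 1
H = np.maximum(W_sense @ X, 0.0)   # ReLU sensory features, shape (n_hidden, n)


def learn_two_rules(features):
    """Learn rule 1, protect its classifier inputs with P, then learn rule 2.

    features(ctx) returns the classifier inputs for context ctx.
    Returns (rule-1 loss, rule-2 loss) after both stages."""
    F1, F2 = features(0), features(1)
    W, P = np.zeros((1, n_hidden)), np.eye(n_hidden)
    W = train(W, F1, Y1, P)
    for f in F1.T:
        P = update_projector(P, f)
    W = train(W, F2, Y2, P)
    return loss(W, F1, Y1), loss(W, F2, Y2)


no_context = learn_two_rules(lambda ctx: H)                           # OWM alone
with_context = learn_two_rules(lambda ctx: H * masks[ctx][:, None])  # OWM + context gating
print("OWM alone:    rule-1 loss %.1e, rule-2 loss %.1e" % no_context)
print("OWM + gating: rule-1 loss %.1e, rule-2 loss %.1e" % with_context)
```

Expect the first line to show rule 1 kept but rule 2 essentially unlearned, and the second line to show both rules fitted; the exact numbers depend on the random seed.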

After combining the CDP module with the OWM algorithm, the system can sequentially learn 40 different, context-specific mapping rules with a single classifier. Its accuracy is very close to that achieved by multi-task training with 40 classifiers.

Orthogonal weights modification can overcome catastrophic forgetting, and the context-dependent processing module enables a single network to acquire numerous context-dependent mapping rules in an online and continual manner. By combining OWM with CDP, intelligent agents could use continual learning to adapt to complex and dynamic environments and ultimately reach a higher level of intelligence.